A small selection of other artistic and creative projects I’ve been involved in.

Conceptronica (2019)

with Charles Holbrow and Manaswi Mishra

An attempt at automatically generating album reviews and album art from audio using neural networks. It uses MARD (the Multimodal Album Reviews Dataset) from the MTG, fine-tunes a language model and prompts it with words derived from audio features, and conditions a GAN on audio features, with very limited success (but virtually unlimited amusement). Done as a project in MAS S68, Fall 2019. Code and samples are forthcoming; for now, here is a review snippet (it doesn’t match the input much, but I think it’s quite fun to read):

This cd does not just put you in the mix. It makes you think about life, take you on a wonderful ride, meditate on this, get you down in this and… you’ll do it! I love this cd, and thank you Amazon for having the amazing talent and variety on this cd.Just how great Moon Records are for making great albums and for being able to get them on CD is also one of the reasons why I liked this disc the most. The fact that this could be a cheaper import CD just makes it even more enticing. There are no liner notes or anything but just short bio on the band. A great addition to anyone’s Moon Records library.As for Moon Records, they are a veteran outfit led by the great voice of John Cage who is too busy doing remakes and re-recordings to mention so I’ll focus on their records. Luther Vandross had the Masterpuppets in 1970 and Led Zeppelin, Paul Butterfield was the real genius of the 60’s, “Byronic” was the marriage of Miles Davis and Bird with Chick Corea. Roy Orbison was their self-tourmaster of the 1970s. from the 70’s Paul Vandross was an unhappy man who went through the 90’s and Ray Charles to the advent of the 90’s Ray Barrett Crudupnick, Peas was the jealous master of the wheel. would pull together. together, Lennon broke up his Red Hotdue to his story with Bird was the 60’s with Mel Lewis & an original with George Jones and the late 60’s African Wind and Roy Eldridge had two stellar albums. had a great sides from Moby and Muddy Waters, Buggie from Golden soulful The Flies. Terence Crandell played a bit while Beck caught a back in his rock break thru Blues Kansas City with Slim. These are recorded in the mid 70’s Funk House era.
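The project doesn’t say which audio features or words went into the prompts, so the following is only a hypothetical sketch of the prompting step: a few scalar features (the names `tempo_bpm`, `energy`, and `mode`, the thresholds, and the vocabulary are all made up for illustration) are turned into adjectives and then into a review-style opening for a language model to continue.

```python
# Hypothetical sketch: derive prompt words from audio features.
# Feature names, thresholds, and vocabulary are illustrative assumptions.

def features_to_words(features: dict) -> list:
    """Map a few scalar audio features to descriptive words."""
    words = []
    tempo = features.get("tempo_bpm", 0.0)
    if tempo >= 140:
        words.append("frantic")
    elif tempo >= 100:
        words.append("upbeat")
    else:
        words.append("laid-back")
    # Energy is assumed to be normalized to [0, 1].
    words.append("loud" if features.get("energy", 0.0) > 0.6 else "gentle")
    # Mode is assumed to be the string "major" or "minor".
    words.append("bright" if features.get("mode") == "major" else "moody")
    return words


def build_prompt(features: dict) -> str:
    """Turn the derived words into a review-style prompt to be continued by a language model."""
    return "This " + ", ".join(features_to_words(features)) + " album"


print(build_prompt({"tempo_bpm": 120, "energy": 0.8, "mode": "minor"}))
# This upbeat, loud, moody album
```

The fine-tuned model would then continue the prompt; as the snippet shows, it is free to wander far from it.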

The AudioObservatory (2019)

A system that takes a large collection of audio files, extracts a number of feature trajectories from each, computes distance matrices based on these features (using dynamic time warping, or DTW, for example), and then creates a weighted combination of these matrices to embed the audio content in a 3D space. The weights can be updated to match user interest, which reorganizes the material. It also defines a number of interactions for exploring the space, including building probabilistic paths that guide navigation based on the user’s choices, and provides multimodal input methods (e.g. face tracking, Leap Motion, MIDI controllers) for quick local and global access to sounds within the source collection.
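A minimal pure-Python sketch of the distance pipeline described above, under a few assumptions: each feature trajectory is a list of numbers, DTW uses an absolute-difference cost, each per-feature matrix is scaled to [0, 1] before weighting, and the probabilistic path step is a softmax over negative distances from the current sound. The function names and the `temperature` parameter are hypothetical, and the final 3D embedding step (e.g. multidimensional scaling of the combined matrix) is omitted.

```python
import math
import random


def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) dynamic time warping with absolute-difference cost."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = minimal cumulative cost of aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j],      # step in a only
                                 cost[i][j - 1],      # step in b only
                                 cost[i - 1][j - 1])  # step in both
    return cost[n][m]


def distance_matrix(trajectories):
    """Pairwise DTW distances for one feature across all files."""
    k = len(trajectories)
    return [[dtw_distance(trajectories[i], trajectories[j]) for j in range(k)]
            for i in range(k)]


def normalize(matrix):
    """Scale a matrix to [0, 1] so features with different units can be mixed."""
    peak = max(max(row) for row in matrix) or 1.0
    return [[v / peak for v in row] for row in matrix]


def combine(matrices, weights):
    """Weighted average of normalized per-feature distance matrices."""
    k = len(matrices[0])
    total = sum(weights)
    normed = [normalize(m) for m in matrices]
    return [[sum(w * m[i][j] for w, m in zip(weights, normed)) / total
             for j in range(k)] for i in range(k)]


def next_sound(combined, current, temperature=0.1, rng=random):
    """Pick the next sound with probability proportional to exp(-distance / temperature)."""
    candidates = [j for j in range(len(combined)) if j != current]
    scores = [math.exp(-combined[current][j] / temperature) for j in candidates]
    return rng.choices(candidates, weights=scores, k=1)[0]


# Two hypothetical features (e.g. loudness and pitch contours) for three files.
loudness = [[0, 1, 2, 1], [0, 1, 1, 2, 1], [5, 5, 4, 5]]
pitch = [[60, 62, 64], [60, 61, 64], [70, 72, 71]]
combined = combine([distance_matrix(loudness), distance_matrix(pitch)], weights=[0.7, 0.3])
print(next_sound(combined, current=0))  # almost always 1, the closest file
```

Changing `weights` is what "updating the combination to match user interest" would amount to here: the same per-feature matrices are reused and only the weighted average is recomputed.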

Compiler Poetry (2019)

Generating poetic compiler messages. I assembled C++ error and warning messages from here and combined them with excerpts of Romantic poetry. This was done as part of a homework assignment in MAS S68, Fall 2019. The (very small) dataset is here.

Examples beginning with “The”:

- The water cannot have a near class from the parameters

- The land feature to the same type the walks

- They handler must past: Subblics

- The coonthing born is not an end of setter;

- The was worst though flowers handlefer clan

- The design daacking list address too large
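The project doesn’t state which generative model produced these lines. As a stand-in, here is a character-level Markov chain, one of the simplest ways to get this kind of half-coherent blend from a mixed corpus. The toy corpus (three invented compiler messages plus a line of Wordsworth) and the function names are illustrative assumptions; generation is seeded with “The”, like the examples above.

```python
import random
from collections import defaultdict


def train(corpus: str, order: int = 3):
    """Record which character follows each `order`-length context."""
    model = defaultdict(list)
    for i in range(len(corpus) - order):
        model[corpus[i:i + order]].append(corpus[i + order])
    return model


def generate(model, seed: str, length: int = 60, order: int = 3, rng=random):
    """Extend `seed` one character at a time by sampling observed successors."""
    out = seed
    while len(out) < length:
        successors = model.get(out[-order:])
        if not successors:  # unseen context: stop early
            break
        out += rng.choice(successors)
    return out


# Hypothetical toy corpus mixing compiler messages with Romantic poetry.
corpus = (
    "error: no matching function for call to 'foo'\n"
    "warning: unused variable 'x'\n"
    "The world is too much with us; late and soon\n"
    "error: invalid conversion from 'int' to 'char*'\n"
)
model = train(corpus)
print(generate(model, "The", rng=random.Random(1)))
```

With a corpus this small the output mostly copies its sources; the blends get stranger as more messages and poems are added and as `order` is lowered.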

Scream (2018)

Scream (2018) is an audiovisual installation that uses face tracking as input to control an instrument built from the audio portion of The Ryerson Audio-Visual Database of Emotional Speech and Song (RAVDESS) by Livingstone & Russo (licensed under CC BY-NC-SA 4.0). Facial features and gestures are used to construct textures from the database using corpus-based concatenative synthesis. This work is part of an ongoing series exploring artistic applications of audio datasets, bodily interfaces to recorded sound, and new visual and physical interfaces for exploring sound corpora.

Scream uses perhaps the most primal sound-making device, the mouth, in a silent, visual capacity. It creates a one-to-many mapping in terms of people, allowing the gestures of one person to be expressed through the voices of many. The installation’s title doubles as an instruction, encouraging participants to scream, for whatever reason they like, through the voices of others, without making any acoustic sound themselves.
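At the core of the corpus-based concatenative synthesis described above is unit selection: each short segment of the corpus is described by audio descriptors, and the segment closest to a target descriptor vector is played next. This sketch assumes a hypothetical face-to-target mapping (mouth opening drives loudness, brow raise drives brightness), a three-unit toy corpus with invented file names, and a weighted Euclidean distance; the installation’s actual features and mapping are not specified in the source.

```python
import math

# Hypothetical corpus: each unit is a short segment of a recording, described by
# loudness and brightness (both normalized to [0, 1]) and its position in a file.
CORPUS = [
    {"file": "voice_a.wav", "start": 0.50, "loudness": 0.9, "brightness": 0.8},
    {"file": "voice_b.wav", "start": 1.20, "loudness": 0.2, "brightness": 0.3},
    {"file": "voice_c.wav", "start": 0.10, "loudness": 0.6, "brightness": 0.5},
]


def clamp(v: float) -> float:
    return min(1.0, max(0.0, v))


def face_to_target(mouth_open: float, brow_raise: float) -> tuple:
    """Hypothetical mapping: mouth opening -> loudness, brow raise -> brightness."""
    return clamp(mouth_open), clamp(brow_raise)


def select_unit(target, corpus, weights=(1.0, 1.0)):
    """Return the corpus unit whose descriptors are nearest the target."""
    def cost(unit):
        return math.sqrt(weights[0] * (unit["loudness"] - target[0]) ** 2 +
                         weights[1] * (unit["brightness"] - target[1]) ** 2)
    return min(corpus, key=cost)


# A wide-open mouth with raised brows selects the loud, bright unit.
print(select_unit(face_to_target(0.95, 0.7), CORPUS)["file"])  # voice_a.wav
```

Running this selection continuously on tracked face data, and overlapping the chosen segments, is what turns a silent gesture into a texture built from many recorded voices.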

NYC Sounds (2018)

A visual interface for exploring geotagged sounds aggregated from the internet. It uses granular synthesis and maps street length to grain length.

My roles: Software Development, UX Design
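The street-length-to-grain-length mapping could look roughly like this. The ranges (50 m to 5 km of street, 10 ms to 500 ms grains) and the logarithmic curve are assumptions chosen for illustration, since street lengths span orders of magnitude; the Hann window is a standard way to cut a grain without clicks, not necessarily what the interface uses.

```python
import math


def street_to_grain_ms(street_m, shortest_m=50.0, longest_m=5000.0,
                       min_grain_ms=10.0, max_grain_ms=500.0):
    """Map street length (metres) to grain duration (ms) on a log scale, clamped to range."""
    street_m = min(max(street_m, shortest_m), longest_m)
    t = math.log(street_m / shortest_m) / math.log(longest_m / shortest_m)
    return min_grain_ms + t * (max_grain_ms - min_grain_ms)


def hann_grain(samples, start, length):
    """Cut a grain from `samples` and apply a Hann window so it fades in and out."""
    grain = samples[start:start + length]
    n = len(grain)
    if n < 2:
        return [0.0] * n
    return [s * 0.5 * (1 - math.cos(2 * math.pi * i / (n - 1)))
            for i, s in enumerate(grain)]


sample_rate = 44100
grain_ms = street_to_grain_ms(500.0)  # a 500 m street, halfway on the log scale
grain = hann_grain([1.0] * sample_rate, 0, int(sample_rate * grain_ms / 1000))
print(round(grain_ms, 1), len(grain))
```

With these ranges, short alleys produce clicky, dense textures and long avenues produce longer, more recognizable fragments of the source recordings.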

Schoenberg in Hollywood (Tod Machover Opera) (2018)

Schoenberg in Hollywood is an opera by Tod Machover that premiered in November 2018, with four performances by the Boston Lyric Opera. I worked on the live vocal mix and assisted with the recording, which I later mixed for archival purposes.

Press: The Boston Globe, WBUR

ObjectSounds (2018)

ObjectSounds uses object detection as an interface to sound exploration. It uses detected object names to search Freesound.org and retrieve arbitrarily selected but related sounds recorded by other users. It is intended to offer an expression of the latent sonic potential in a scene. Demo video to come!
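A minimal sketch of the search step, assuming Freesound’s APIv2 text-search endpoint with token authentication. The API key is a placeholder, the object labels stand in for whatever detector is used (the source doesn’t name one), and the random pick mirrors the “arbitrarily selected” results described above.

```python
import json
import random
from urllib.parse import urlencode
from urllib.request import urlopen

SEARCH_URL = "https://freesound.org/apiv2/search/text/"


def build_search_url(object_label: str, api_key: str, page_size: int = 15) -> str:
    """Build a Freesound APIv2 text-search URL for one detected object name."""
    params = {
        "query": object_label,
        "fields": "id,name,previews",
        "page_size": page_size,
        "token": api_key,
    }
    return SEARCH_URL + "?" + urlencode(params)


def search(object_label: str, api_key: str) -> dict:
    """Fetch and parse the search results (requires network access and a real key)."""
    with urlopen(build_search_url(object_label, api_key)) as resp:
        return json.load(resp)


def pick_sound(response_json: dict, rng=random):
    """Choose an arbitrary (random) result from a parsed search response, or None."""
    results = response_json.get("results", [])
    return rng.choice(results) if results else None


labels = ["cup", "chair"]  # hypothetical detector output
for label in labels:
    print(build_search_url(label, api_key="YOUR_API_KEY"))
```

Each picked result’s `previews` field then gives a URL to an audio preview that can be streamed for the detected object.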

FreesoundKit (2018)

FreesoundKit is a Swift client API for Freesound.

A Rose Out of Concrete (Boston Conservatory Ballet) (2018)

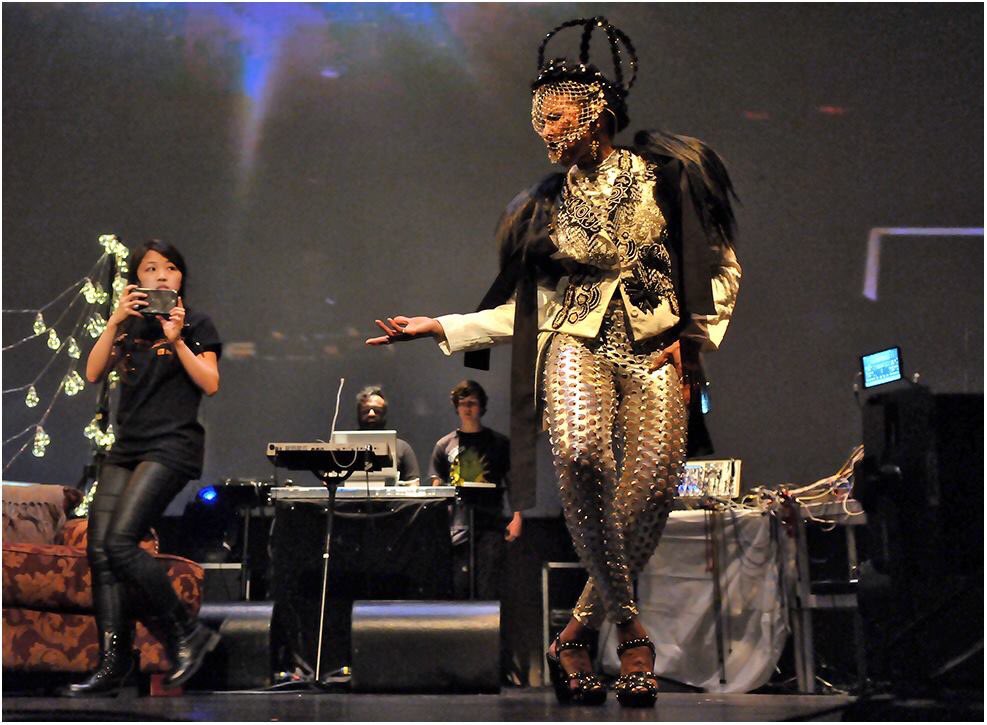

A Rose Out of Concrete is an “Afrofuturistic multidisciplinary” dance project by Nona Hendryx, Hank Shocklee, Duane Lee Holland Jr., and Dr. Richard Boulanger, presented as part of the Boston Conservatory at Berklee’s Limitless show (April 2018). I worked on various aspects of the show’s technology, co-leading a team of students.


Press: The Bay State Banner

MusicianKit (2017)

MusicianKit provides a simple API for musical composition, investigation, and more. It is not intended to produce audio; rather, it provides tools for constructing and working with musical ideas and sequences, and for gathering information about how musical iOS apps are being used through a kind of distributed corpus analysis, in which the corpus is a living collection of music being made by iOS musickers.
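MusicianKit itself is a Swift library, and this Python sketch does not reproduce its API. It only illustrates the idea of distributed corpus analysis under a simple assumption: each app contributes a pitch-class histogram of the sequences its users make, and the histograms are pooled into one corpus-wide distribution. The function names and the sample sequences are hypothetical.

```python
from collections import Counter


def pitch_class_histogram(midi_notes):
    """Count pitch classes (0 = C, 1 = C#, ..., 11 = B) in one sequence of MIDI notes."""
    return Counter(note % 12 for note in midi_notes)


def merge_histograms(histograms):
    """Pool per-app histograms into a normalized corpus-wide distribution."""
    total = Counter()
    for h in histograms:
        total.update(h)
    count = sum(total.values())
    return {pc: total[pc] / count for pc in range(12)} if count else {}


# Hypothetical sequences contributed by three different apps.
contributions = [[60, 64, 67], [62, 65, 69], [60, 62, 64, 65, 67]]
distribution = merge_histograms(pitch_class_histogram(seq) for seq in contributions)
print(distribution[0], distribution[1])  # share of C, share of C#
```

Because only counts leave each app, the pooled statistics can keep growing as more music is made, which is what makes the corpus “living”.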

csPerformer (2017)

csPerformer was my undergraduate thesis project in the Electronic Production and Design department at Berklee. It is a prototype of a system intended to facilitate composition through performance, or composition in a performative manner. It does this by letting you sing, play, or otherwise feed pitches and sounds into the system, giving yourself material to compose with in real time. In addition to acting as a compositional interface for performers, it is intended to bring physicality to compositional actions, or to allow compositional decisions to be expressed physically.
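The source doesn’t say how csPerformer extracts pitches from a live input, so here is a swapped-in, textbook approach: pick the lag with the highest autocorrelation within a plausible pitch range, convert it to a frequency, and round that to a MIDI note that can serve as compositional material. The search range and function names are assumptions; taking the raw maximum like this can produce octave errors on real voices.

```python
import math


def detect_pitch(samples, sample_rate, fmin=80.0, fmax=1000.0):
    """Estimate the fundamental frequency (Hz) from the autocorrelation peak."""
    min_lag = int(sample_rate / fmax)
    max_lag = int(sample_rate / fmin)
    best_lag, best_corr = None, 0.0
    for lag in range(min_lag, min(max_lag, len(samples) - 1) + 1):
        corr = sum(samples[i] * samples[i + lag] for i in range(len(samples) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag if best_lag else None


def hz_to_midi(freq):
    """Round a frequency to the nearest MIDI note number (A4 = 440 Hz = 69)."""
    return round(69 + 12 * math.log2(freq / 440.0))


sr = 8000
tone = [math.sin(2 * math.pi * 220 * n / sr) for n in range(2048)]  # synthetic A3
freq = detect_pitch(tone, sr)
print(round(freq, 1), hz_to_midi(freq))
```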

Soundscaper (2017)

Soundscaper is an approach to placing and distributing music and sound in physical space, using augmented reality, in ways that can be interacted with.

It presents a model, inspired by interactions more common to the visual arts, for musical and multimodal composition, as well as for user interactions with music, sound, and space that extend contemporary practices in these media. It is spatialization not in the sense that the sources of sound are distributed in space, but in the sense that the experience of them is.
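One way the experience of placed sounds changes as a visitor walks is through distance-based gain. The source doesn’t specify Soundscaper’s attenuation model; this sketch assumes the “inverse” distance model defined in the Web Audio API specification, and the `SoundAnchor` structure, sound names, and positions are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class SoundAnchor:
    """A sound placed at a fixed point in the room (hypothetical structure)."""
    name: str
    position: tuple            # (x, y, z) in metres
    ref_distance: float = 1.0  # distance at or below which gain is 1.0


def gain_for(anchor: SoundAnchor, listener: tuple, rolloff: float = 1.0) -> float:
    """Inverse distance model: ref / (ref + rolloff * (max(d, ref) - ref))."""
    d = max(math.dist(anchor.position, listener), anchor.ref_distance)
    return anchor.ref_distance / (anchor.ref_distance + rolloff * (d - anchor.ref_distance))


def mix_levels(anchors, listener):
    """Gain of every placed sound for the listener's current position."""
    return {a.name: gain_for(a, listener) for a in anchors}


anchors = [SoundAnchor("bells", (0.0, 0.0, 0.0)), SoundAnchor("rain", (4.0, 0.0, 0.0))]
print(mix_levels(anchors, listener=(1.0, 0.0, 0.0)))  # bells at full level, rain at one third
```

Recomputing `mix_levels` on every tracking update means each listener hears a different mix of the same placed material, depending on where they stand.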

Twitter Ensemble (2017)

The Twitter Ensemble is a generative musical system that interprets music-related tweets in real time.

The Sound of Dreaming (2017)

The Sound of Dreaming is a musical-technological-theatrical work written by and starring Nona Hendryx and Dr. Richard Boulanger. In it, a researcher seeks to steal the voice of a celebrated singer and infuse his machines with it in order to become invincible, and a variety of ideas are explored along the way. It has been presented twice: at Moogfest in Durham, NC, on May 19, 2017, and three months later at MASS MoCA’s Hunter Center in North Adams, MA, on August 19, 2017, as part of Nick Cave’s Until.

Berklee Music Video: Kidzapalooza (2017)

As part of an interactive audiovisual experience designed for children and presented at Kidzapalooza (at Lollapalooza Chicago) in 2017, I designed and built a generative musical system that let children play convincingly in a few popular styles of music using a number of hardware interfaces.

The concept, as well as the visual and lighting system, is by Blake Adelman.