Non-photorealistic Camera: Depth Edge Detection and Stylized Rendering using Multi-Flash Imaging

Ramesh Raskar, Kar-han Tan, Rogerio Feris, Jingyi Yu, Matthew Turk

Appeared in ACM SIGGRAPH 2004, August 2004


Depth Edge Detection and Highlighting using a Multi-Flash Technique, 2002

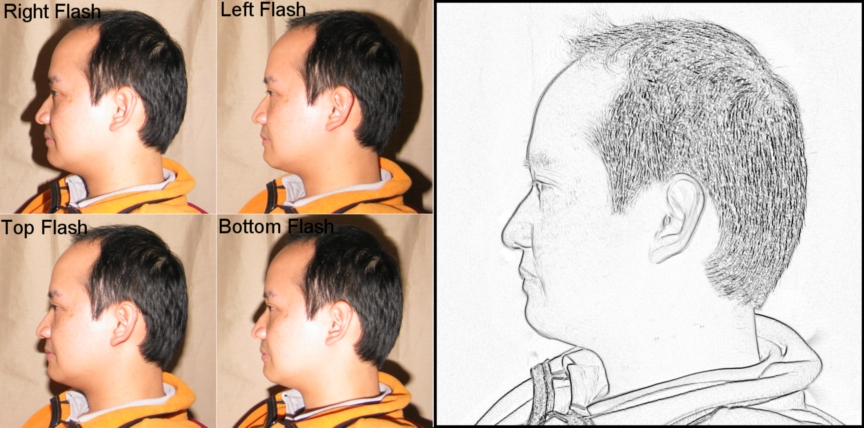

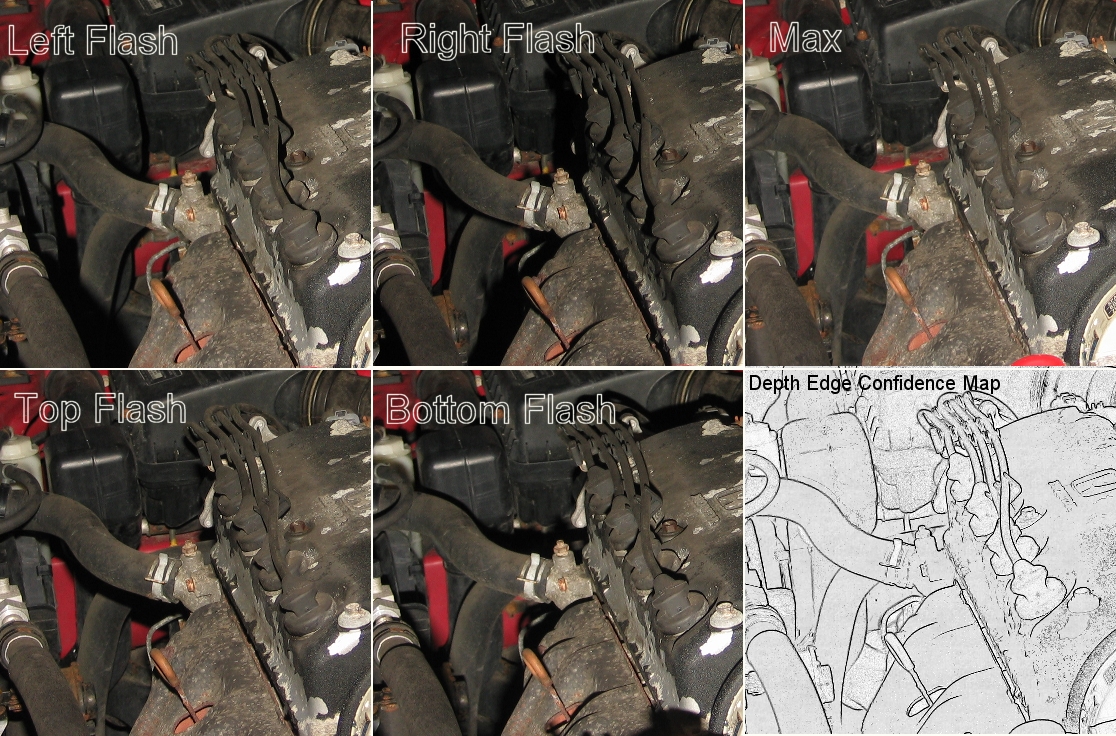

Imagine a camera, no larger than existing digital cameras, that can directly find depth edges or create stylized images. As we know, a flash to the left of a camera creates a sliver of shadow to the right of each silhouette (depth discontinuity) in the image. We add a flash on the right, which creates a sliver of shadow to the left of each silhouette, and flashes above and below the lens. By observing these shadows, one can robustly find all the pixels corresponding to shape boundaries (depth discontinuities). This is a strikingly simple way of computing depth edges. Below, we show one potential application: generating NPR images. Watch a short movie (12MB).
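The shadow-based idea above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes four ambient-subtracted flash images taken with flashes exactly left, right, above and below the lens (so the epipolar lines are plain image rows and columns), divides each image by the per-pixel maximum over all flash images to cancel surface albedo, and marks a depth edge wherever a ratio image drops sharply from lit to shadowed while walking away from that flash. The names `ratio_images`, `depth_edges`, `AWAY_FROM_FLASH` and the threshold `step=0.5` are hypothetical.

```python
from typing import Dict, List

Image = List[List[float]]

# Direction of travel away from each flash, as (drow, dcol).
# Assumption: flashes sit exactly left/right/above/below the lens.
AWAY_FROM_FLASH = {
    "left": (0, 1),     # left flash: shadows fall to the right, so walk rightward
    "right": (0, -1),   # right flash: shadows fall to the left, so walk leftward
    "top": (1, 0),      # top flash: shadows fall below, so walk downward
    "bottom": (-1, 0),  # bottom flash: shadows fall above, so walk upward
}


def ratio_images(flash_images: Dict[str, Image], eps: float = 1e-6) -> Dict[str, Image]:
    """Divide each flash image by the per-pixel maximum over all flash images."""
    names = list(flash_images)
    h = len(flash_images[names[0]])
    w = len(flash_images[names[0]][0])
    # The max composite is (approximately) shadow-free, so the ratio is ~1 where
    # a pixel is lit and ~0 where it lies in that flash's shadow, whatever its albedo.
    i_max = [[max(flash_images[n][r][c] for n in names) for c in range(w)] for r in range(h)]
    return {
        n: [[flash_images[n][r][c] / (i_max[r][c] + eps) for c in range(w)] for r in range(h)]
        for n in names
    }


def depth_edges(flash_images: Dict[str, Image], step: float = 0.5) -> List[List[bool]]:
    """Mark pixels where a ratio image drops sharply (lit -> shadow) walking away from its flash."""
    ratios = ratio_images(flash_images)
    any_img = next(iter(flash_images.values()))
    h, w = len(any_img), len(any_img[0])
    edges = [[False] * w for _ in range(h)]
    for name, ratio in ratios.items():
        dr, dc = AWAY_FROM_FLASH[name]
        for r in range(h):
            for c in range(w):
                pr, pc = r - dr, c - dc  # previous pixel along the walk
                if 0 <= pr < h and 0 <= pc < w and ratio[pr][pc] - ratio[r][c] > step:
                    edges[pr][pc] = True  # last lit pixel before the shadow sliver
    return edges
```

Because the ratio divides out albedo, a pure texture change appears in every flash image at once and produces no drop, which is how this kind of test separates depth edges from material edges.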

Abstract

We present a non-photorealistic rendering approach to capture and convey shape features of real-world scenes. We use a camera with multiple flashes that are strategically positioned to cast shadows along depth discontinuities in the scene. The projective-geometric relationship of the camera-flash setup is then exploited to detect depth discontinuities and distinguish them from intensity edges due to material discontinuities. We introduce depiction methods that utilize the detected edge features to generate stylized static and animated images. We can highlight the detected features, suppress unnecessary details or combine features from multiple images. The resulting images more clearly convey the 3D structure of the imaged scenes. We take a very different approach to capturing geometric features of a scene than traditional approaches that require reconstructing a 3D model. This results in a method that is both surprisingly simple and computationally efficient. The entire hardware/software setup can conceivably be packaged into a self-contained device no larger than existing digital cameras.

PDF

Movie | Powerpoint Slides from Siggraph Presentation | Laparoscopic Camera | Other Projects | Paleontology | Photo.Net article | Slashdot Discussion | Blog1, Blog2, Blog3

Related Siggraph 2004 Activities and Publicity

Emerging Technologies Booth showing real-time demonstration (The 'A-ha: Take on me' demo)

Siggraph 2004 cover

Paper Presentation, Wednesday 3:45pm

E-tech Presentation, Monday 10:30am

Highlighted Paper

Highlighted Emerging Technology activity

Electronic Theater (conference video highlight)

Student Poster (Specularities)

Related Papers

A Non-Photorealistic Camera: Depth Edge Detection and Stylized Rendering with Multi-Flash Imaging. Ramesh Raskar, Karhan Tan, Rogerio Feris, Jingyi Yu and Matthew Turk. ACM SIGGRAPH 2004.

Also accepted for Siggraph Emerging Technologies, 2004.

Exploiting Depth Discontinuities for Vision-Based Fingerspelling Recognition. Rogerio Feris, Matthew Turk, Ramesh Raskar, Karhan Tan and Gosuke Ohashi. IEEE Workshop on Real-time Vision for Human-Computer Interaction (in conjunction with CVPR'04), Washington DC, USA, 2004.

Specular Reflection Reduction with Multi-Flash Imaging. Rogerio Feris, Ramesh Raskar, Karhan Tan and Matthew Turk. IEEE Brazilian Symposium on Computer Graphics and Image Processing (Sibgrapi'04), Curitiba, Brazil, 2004.

Shape Enhanced Surgical Visualizations with Multi-flash Imaging. Karhan Tan, Rogerio Feris, James Kobler, Paul Dietz and Ramesh Raskar. International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI'04), France, 2004.

Discussion of the method, Source and Images, Slides plus details, PDF Sketch, More Projects

Imitating Sketching

|

Source

Image

|

Output

Image (Texture-removed shape

clarifying Rendering)

|

|

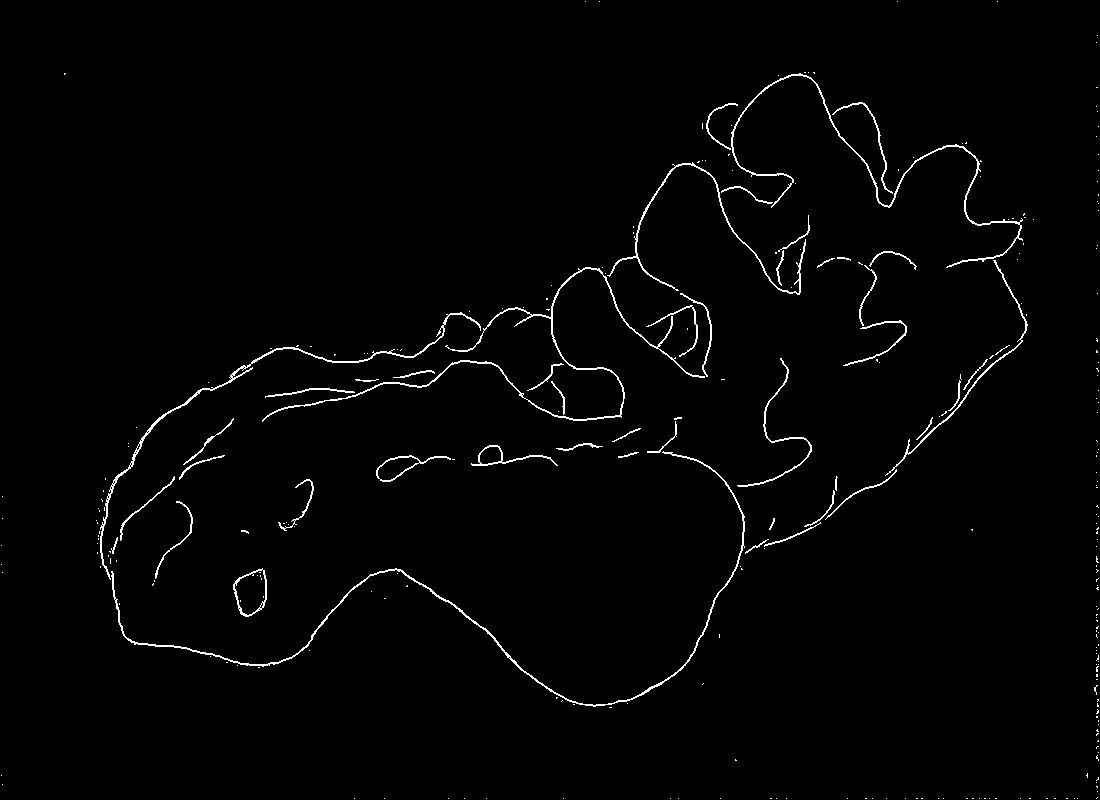

Raw Depth

Discontinuity Confidence Map created by Multi-Flash Camera

(Unprocessed results) Notice the individual leaves now clearly visible |

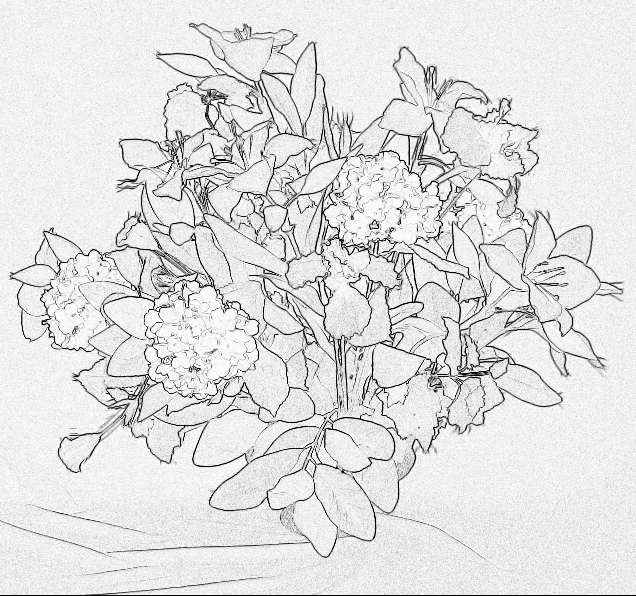

| Source image |

Texture-removed

shape clarifying image |

Unprocessed

raw depth edge confidence map |

|

|

|

|

|
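The confidence maps in these figures are described as unprocessed. One plausible way to get a graded confidence instead of a yes/no edge, assumed here rather than taken from the paper, is to keep the size of the lit-to-shadow drop in each ratio image. The sketch below does this for a single image row lit by hypothetical "left" and "right" flashes only, so the row itself is the epipolar line; `scanline_confidence` is an illustrative name.

```python
from typing import Dict, List


def scanline_confidence(flash_rows: Dict[str, List[float]], eps: float = 1e-6) -> List[float]:
    """Per-pixel depth-edge confidence along one image row.

    Assumes only horizontal flashes ("left", "right"). The confidence at a pixel
    is the largest drop in ratio value from that pixel to its neighbour on the
    side facing away from the flash (where the shadow sliver would fall).
    """
    names = list(flash_rows)
    w = len(flash_rows[names[0]])
    # Per-pixel maximum over flash images: an approximately shadow-free reference.
    row_max = [max(flash_rows[n][c] for n in names) for c in range(w)]
    conf = [0.0] * w
    for name in names:
        if name not in ("left", "right"):
            raise ValueError(f"unsupported flash position: {name}")
        ratio = [flash_rows[name][c] / (row_max[c] + eps) for c in range(w)]
        away = 1 if name == "left" else -1  # step away from the flash
        for c in range(w):
            nxt = c + away
            if 0 <= nxt < w:
                # Only positive drops (lit -> shadow) raise the confidence.
                conf[c] = max(conf[c], ratio[c] - ratio[nxt])
    return conf
```

Thresholding this map would give a binary edge image; leaving it unthresholded keeps weak, uncertain edges visible, in the spirit of an "unprocessed" result.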

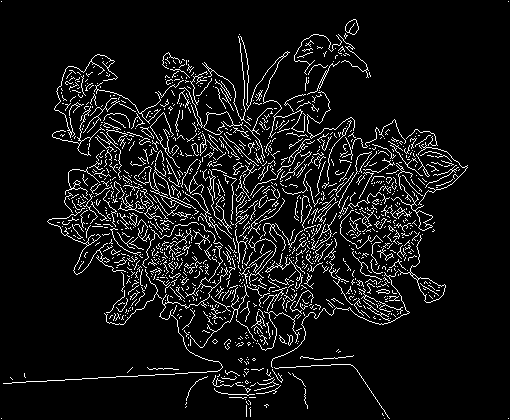

| Histogram

equalized |

Gamma

improved (3.0) |

Canny edge

detection (sigma=1) |

Canny edge

detection (sigma=2) |

|

|

|

|
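The comparison panels apply standard contrast tools to an ordinary photograph. For reference, here is a minimal sketch of histogram equalization and gamma adjustment on 8-bit grey values. Reading "gamma improved (3.0)" as out = in^(1/3.0), which brightens dark regions, is our assumption; the function names are hypothetical.

```python
from typing import List


def equalize_histogram(pixels: List[int], levels: int = 256) -> List[int]:
    """Classic histogram equalization of 8-bit grey values via the cumulative histogram."""
    if not pixels:
        return []
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    cdf, total = [], 0
    for count in hist:
        total += count
        cdf.append(total)
    cdf_min = next(c for c in cdf if c > 0)
    n = len(pixels)
    if n == cdf_min:  # constant image: nothing to stretch
        return list(pixels)
    # Map each value through the normalised CDF onto the full [0, levels - 1] range.
    return [round((cdf[p] - cdf_min) * (levels - 1) / (n - cdf_min)) for p in pixels]


def gamma_correct(pixels: List[int], gamma: float = 3.0, levels: int = 256) -> List[int]:
    """Brighten dark values with out = in^(1/gamma), computed on a [0, 1] scale."""
    top = levels - 1
    return [round(top * (p / top) ** (1.0 / gamma)) for p in pixels]
```

Both operations only remap existing intensities, so neither can create contrast across a boundary that has none. The Canny panels can be approximated with an existing implementation such as scikit-image's `skimage.feature.canny`, whose `sigma` argument corresponds to the sigma values in the captions.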

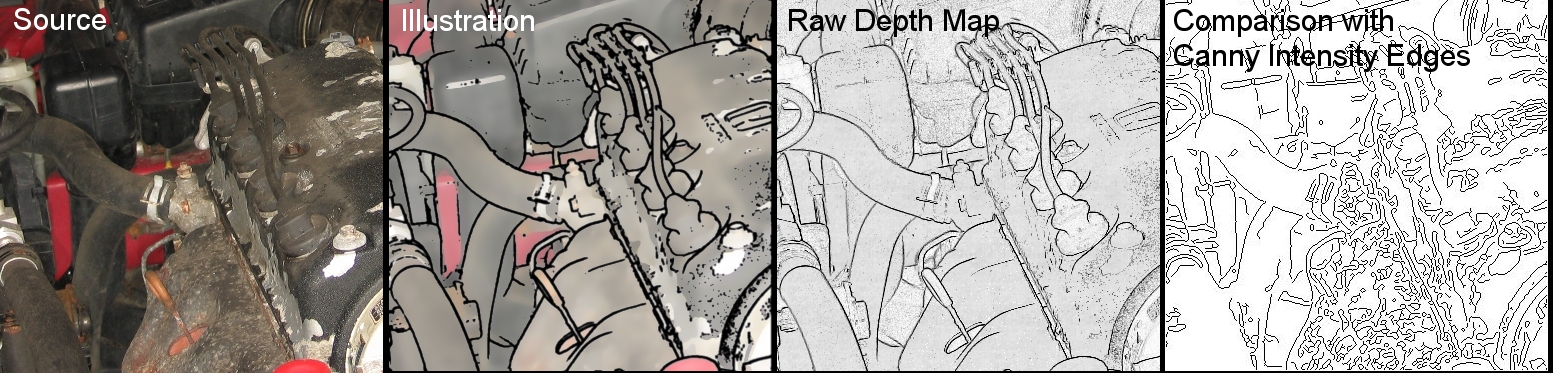

Notice the four spark plugs, dip

stick, shape of engine next to dip stick, and the Honda sign now

clearly

visible in this shape clarifying image.

However, the disadvantage, common to NPR techniques, is that some

texture detail is lost.
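A deliberately crude stand-in for texture de-emphasis, not the authors' rendering method: given one image row and its detected depth edges, replace the intensities between consecutive edges by their mean and draw the edges in dark ink. It makes the trade-off above concrete, since everything inside a segment collapses to a single value. `flatten_texture` and `edge_value` are hypothetical names.

```python
from typing import List


def flatten_texture(row: List[float], edges: List[bool], edge_value: float = 0.0) -> List[float]:
    """Replace intensities between depth edges by their segment mean and ink the edges."""
    out = [0.0] * len(row)
    start = 0
    for i in range(len(row) + 1):
        # A segment ends at a depth-edge pixel or at the end of the row.
        if i == len(row) or edges[i]:
            if i > start:
                seg_mean = sum(row[start:i]) / (i - start)
                for j in range(start, i):
                    out[j] = seg_mean  # texture inside the segment is discarded
            if i < len(row):
                out[i] = edge_value  # draw the depth edge as a dark line
            start = i + 1
    return out
```

Usage: `flatten_texture([0.2, 0.4, 0.9, 0.7, 0.8, 0.3, 0.5], [False, False, True, False, False, True, False])` yields segment means of about 0.3, 0.75 and 0.5, with zeros at the two edge pixels.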

Source Image

Raw Result of Our Method for Depth Edge Detection (with no post-processing)

One type of rendering

Comparison with Intensity Edge detection (Canny) results

More Canny Edge detection results for this dataset

Source Image

Raw Result of Our Method for Depth Edge Detection (with no post-processing)

One type of rendering (texture de-emphasis)

Comparison with Intensity Edge detection (Canny) results


We were surprised by the simplicity of our depth edge detection solution. We found it difficult to believe that, while photometric stereo techniques solved the more difficult problem of computing surface orientation (and, indirectly, the depth values), procedures to directly find depth discontinuities were rarely explored.

Hence, after developing this technique in 2002, we discussed it with various researchers at CVPR 2003 (where it was also shown as a demo), ICCV 2003 and Siggraph 2003 (where the basic ideas of depth edge detection were presented as a sketch) and asked for feedback. We concluded that one possible reason this type of strategic placement of light sources very close to the camera was overlooked is that, for most photometric stereo, shape-from-shadow or shape-from-shading techniques, this flash configuration is in fact a failure case. However, beyond what our search and discussions turned up, it is quite possible that an approach similar to ours was explored in the 1960s or 1970s. So we asked researchers who have been active since the 1970s; they referred us to papers that analyzed intensity edges in images, but those techniques required widely varying illumination on objects with uniform albedo. When comparing with most other techniques, a simple question to ask is: will the method find a depth edge for a white piece of paper in front of a white background?
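The white-paper question can be made concrete with a toy 1-D example. Assume a row of uniform albedo 0.9 (white paper at columns 3 to 5 in front of a white background), a one-pixel shadow sliver from each of two hypothetical left and right flashes, and illustrative thresholds. The neighbour-difference detector below is a crude stand-in for an intensity edge detector such as Canny, not Canny itself; both function names are hypothetical.

```python
from typing import List


def intensity_edges(row: List[float], thresh: float = 0.1) -> List[int]:
    """Indices where neighbouring intensities differ by more than thresh."""
    return [c for c in range(len(row) - 1) if abs(row[c + 1] - row[c]) > thresh]


def flash_depth_edges(left_flash: List[float], right_flash: List[float],
                      step: float = 0.5, eps: float = 1e-6) -> List[int]:
    """Depth edges from a left/right flash pair via ratio images (lit -> shadow drops)."""
    w = len(left_flash)
    row_max = [max(a, b) for a, b in zip(left_flash, right_flash)]
    r_left = [left_flash[c] / (row_max[c] + eps) for c in range(w)]
    r_right = [right_flash[c] / (row_max[c] + eps) for c in range(w)]
    found = set()
    for c in range(w - 1):
        if r_left[c] - r_left[c + 1] > step:    # left flash: shadow falls to the right
            found.add(c)
        if r_right[c + 1] - r_right[c] > step:  # right flash: shadow falls to the left
            found.add(c + 1)
    return sorted(found)


white = [0.9] * 8          # shadow-free view: paper and background look identical
left = white[:]
left[6] = 0.1              # sliver right of the paper, cast by the left flash
right = white[:]
right[2] = 0.1             # sliver left of the paper, cast by the right flash
print(intensity_edges(white))          # no contrast, so no edges
print(flash_depth_edges(left, right))  # both sides of the paper
```

The shadow-free view has no intensity contrast at all, so any purely intensity-based detector finds nothing, while the flash shadows still reveal both silhouette pixels (columns 3 and 5).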

More data sets

More Computational Photography Projects
